Hello everyone,

I am currently researching LoRaWAN as part of my PhD, and having started only 6 months ago, I'm still wrapping my head around many things.

Regarding duty cycle limitations, and ignoring for the moment TTN's fair access policy: as I understand it, both sub-bands (g and g1) have a duty cycle of 1%. I am using the TTN hardware (gateway and UNO) with the standard frequencies of the EU frequency plan: 868.1, 868.3, 868.5, 867.1, 867.3, 867.5, 867.7 and 867.9 MHz.

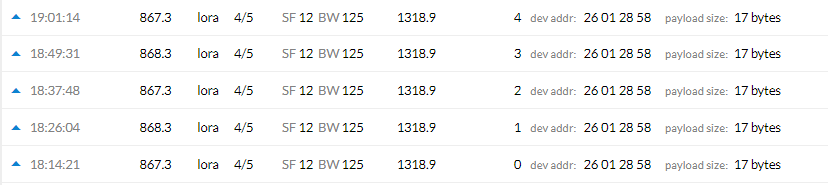

To understand the limitations further, I set up my two nodes to transmit 4 bytes of payload (+13 bytes of header) at SF12BW125, which gives an airtime of 1318.9 ms in the TTN console.
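As a sanity check on that airtime figure, here is a minimal sketch of the usual LoRa time-on-air formula (the one given in the Semtech SX127x datasheets). The function name `lora_airtime_ms` is my own, and the radio settings are assumptions rather than values I read from the console: coding rate 4/5, an 8-symbol preamble, explicit header, CRC on, and low data rate optimization enabled for SF12 at 125 kHz.

```python
import math


def lora_airtime_ms(payload_bytes, sf, bw_hz, coding_rate=1,
                    preamble_symbols=8, explicit_header=True, crc=True,
                    low_data_rate_opt=None):
    """Estimate LoRa time on air in milliseconds.

    coding_rate is 1..4, meaning 4/5..4/8. All defaults are assumptions
    (typical LoRaWAN uplink settings), not values taken from the TTN console.
    """
    symbol_time_s = (2 ** sf) / bw_hz
    if low_data_rate_opt is None:
        # Assumption: optimization is enabled when a symbol lasts longer
        # than 16 ms, which is the case for SF11 and SF12 at 125 kHz.
        low_data_rate_opt = symbol_time_s > 0.016

    de = 1 if low_data_rate_opt else 0
    ih = 0 if explicit_header else 1
    crc_bits = 16 if crc else 0

    numerator = 8 * payload_bytes - 4 * sf + 28 + crc_bits - 20 * ih
    denominator = 4 * (sf - 2 * de)
    payload_symbols = 8 + max(
        math.ceil(numerator / denominator) * (coding_rate + 4), 0
    )

    total_symbols = preamble_symbols + 4.25 + payload_symbols
    return total_symbols * symbol_time_s * 1000.0


# 4 bytes of application payload plus 13 bytes of LoRaWAN overhead
print(f"{lora_airtime_ms(4 + 13, sf=12, bw_hz=125_000):.1f} ms")
```

Under those assumptions the result agrees with the 1318.9 ms shown in the console for a 17-byte packet.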

If the duty cycle is enforced per sub-band, having 2 sub-bands (g and g1) should effectively halve the silence time required between two transmissions sent from the same device.

According to my calculations, an airtime of 1318.9 ms with an effective 2% duty cycle requires a silence time of about 64.6 seconds: 1318.9 * (1/(2/100) - 1) ms.
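To make the arithmetic explicit, here is a small sketch of that silence-time calculation. The function name `required_silence_ms` is just for illustration; the 2% figure is my assumption of two 1% sub-bands adding up, and the 1% line is the worst case where every transmission lands in the same sub-band.

```python
def required_silence_ms(airtime_ms, duty_cycle):
    """Minimum off time after one transmission for a given duty cycle.

    duty_cycle is a fraction, e.g. 0.01 for 1%.
    """
    if not 0 < duty_cycle <= 1:
        raise ValueError("duty_cycle must be in (0, 1]")
    return airtime_ms * (1 / duty_cycle - 1)


airtime_ms = 1318.9  # airtime reported by the TTN console

# Assumption: two 1% sub-bands behave like a combined 2% budget.
print(f"2%: {required_silence_ms(airtime_ms, 0.02) / 1000:.1f} s")  # about 64.6 s

# Worst case: all transmissions in a single 1% sub-band.
print(f"1%: {required_silence_ms(airtime_ms, 0.01) / 1000:.1f} s")  # about 130.6 s
```

Both values are below my 150 s transmission interval.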

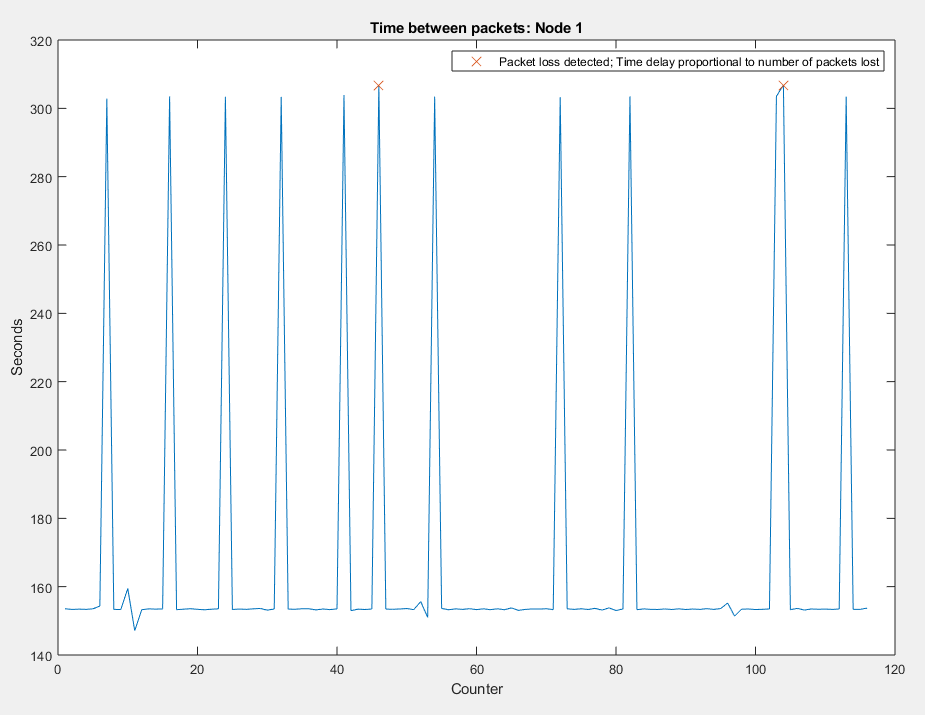

When I set my transmission interval to 150 s (which should be plenty of silence time) and plot the time between received packets against their counter value, this happens:

Some packets appear to have been delayed twice as long as they should have been (300 s instead of 150 s) without their counter being increased. This, together with the Arduino serial output, leads me to conclude that these were packets that failed to send because of no_free_channel. In the above, only two packets are flagged as missing, because the difference between their counter and the previous one is greater than 1, and their time delay is increased to 300 s as expected.

Why is this happening? What am I getting wrong about duty cycles?

Even with a 1% duty cycle, i.e. ignoring the fact that channel hopping should change sub-band between transmissions (I think), the silence time needed to stay within regulations should not be greater than 150 s.

Thank you very much!